Opportunity space

Mathematics for Safe AI

We do not yet know of technical solutions that ensure powerful AI systems interact as intended with real-world systems and populations. A combination of scientific world-models and mathematical proofs may be the answer to ensuring AI provides transformational benefit without harm.

What if we could use advanced AI to drastically improve our ability to model and control everything from the electricity grid to our immune systems?

Defined by our Programme Directors (PDs), opportunity spaces are areas we believe are likely to yield breakthroughs.

In Mathematics for Safe AI, we are exploring how to leverage mathematics and scientific modelling to advance transformative AI and provide a basis for provable safety.

Beliefs

The core beliefs that underpin this opportunity space:

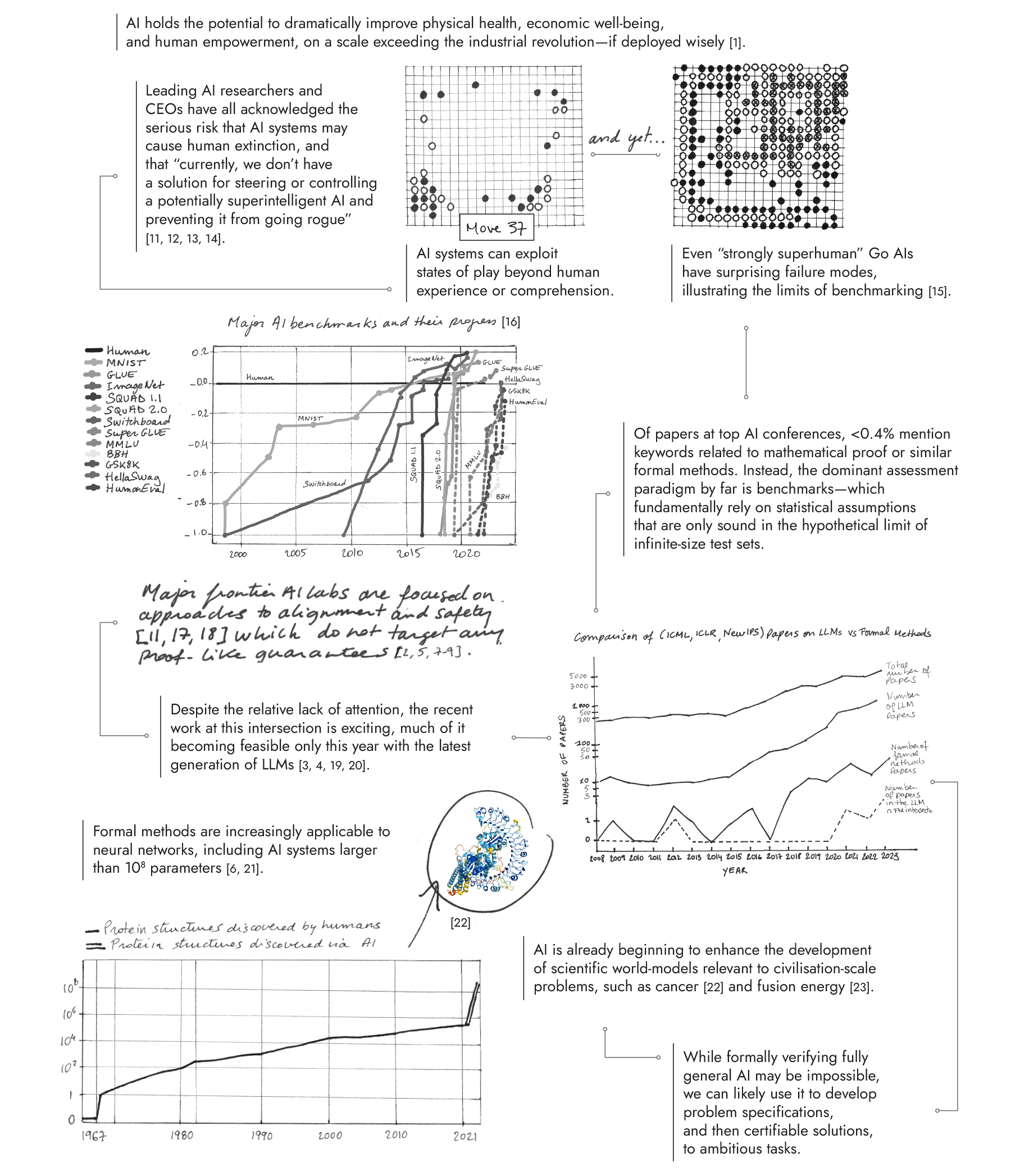

Future AI systems will be powerful enough to transformatively enhance or threaten human civilisation at a global scale → we need as-yet-unproven technologies to certify that cyber-physical AI systems will deliver intended benefits while avoiding harms.

Given the potential of AI systems to anticipate and exploit world-states beyond human experience or comprehension, traditional methods of empirical testing will be insufficiently reliable for certification → mathematical proof offers a critical but underexplored foundation for robust verification of AI.

It will eventually be possible to build mathematically robust, human-auditable models that comprehensively capture the physical phenomena and social affordances that underpin human flourishing → we should begin developing such world models today to advance transformative AI and provide a basis for provable safety.

Programme: Safeguarded AI

To build a programme within an opportunity space, our Programme Directors direct the review, selection, and funding of a portfolio of projects.

Backed by £59m, the programme is building a mathematical assurance toolkit that lets fleets of AI agents produce formally verified artifacts at unprecedented speed and scale.

Rather than relying on human reviewers to evaluate every AI output directly, this approach enables scalable oversight through mathematical proof: overseers specify requirements, and trust in the results grounds out in the mathematics itself.