Our goal

AI agents can now write software at a pace that far exceeds human engineering capabilities. Our ability to verify whether that software is correct has not kept up. This gap is evident in software engineering today, but it will become equally pressing in a growing number of domains of science, engineering and decision making more broadly.

This programme aims to close that gap, enabling us to safely deploy AI to transform domains where trust, correctness, and fail-safety are essential.

To advance mathematical modelling and formal verification, we are building and testing an open-source assurance toolkit that lets fleets of AI agents produce formally verified artefacts at unprecedented speed and scale.

Our goal is to equip AI-produced code, control systems, and models with quantitative guarantees of correctness and fail-safety. We will demonstrate these capabilities in one or several critical cyber and cyber-physical domains, such as secure software and hardware, energy grid balancing, supply chain management, and clinical trial design.

If we succeed, we will be able to use AI safely in domains that currently require human-in-the-loop oversight, while simultaneously strengthening resilience – moving faster, across larger systems, and with stronger guarantees than human teams alone can provide.

We have updated our thesis (as of May 2026) to reflect the programme’s sharper focus: expanding the ambition and scope of TA1 ‘Tooling’, and applying these capabilities to high-impact cybersecurity challenges.

This pivot responds to the rapid advance of frontier AI capabilities and positions the programme where it can deliver the greatest impact: building a broader mathematical assurance toolkit and demonstrating its value in cybersecurity. You can access the original thesis in our programme updates below.

Funding open until 1 July 2026

As part of Technical Area 2, this funding call pursues the question: given the advances in AI and formal methods, what are the most ambitious, security-critical systems we can verify today?

To answer this question we are looking to fund teams that fall into one of two tracks. Track 1, building and verifying security-critical components, and Track 2, adversarial evaluation.

Technical areas

This programme is split into two technical areas (TAs), each with its own distinct objectives.

Tooling

We can build an open-source mathematical assurance toolkit that lets fleets of AI agents produce formally verified artifacts at unprecedented speed and scale.

Applications

AI-enabled formal methods can deliver high-impact efficiency and security gains when applied to real-world cybersecurity challenges.

Meet the programme team

Our Programme Directors are supported by a core team that provides a blend of operational coordination and highly specialised technical expertise.

Creator spotlight: Improving the biopharmaceutical supply chain

Pharmaceutical supply chains are a cyber-physical operating context where AI could unlock transformative improvements, but safe deployment is critical. The SAILS (Safeguarded AI for Logistics and Supply Chain Management) team is researching how AI can make pharmaceutical supply chains more resilient and effective.

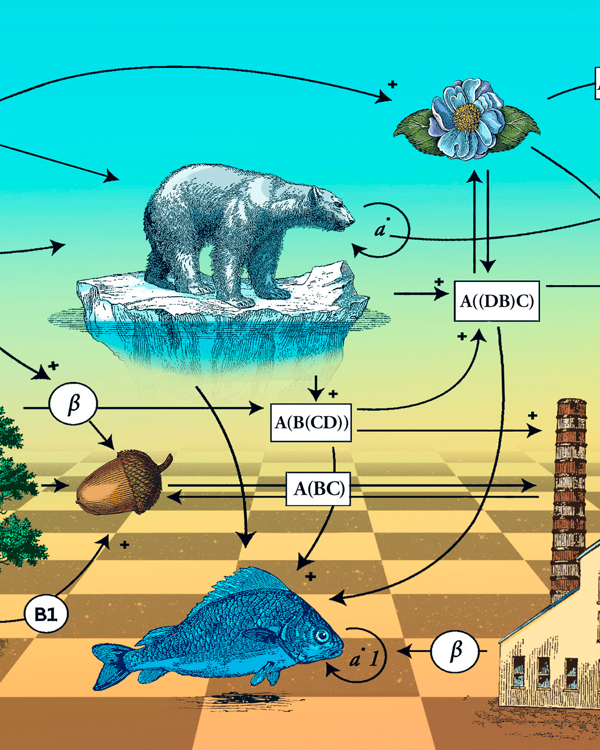

Led by Dei Vilkinsons and Ciaran Morinan, CEO and CTO of start-up HASH, they conducted interviews with more than 80 biopharmaceutical supply chain professionals, built a library and mathematical models of supply chain processes, and identified and validated the most promising applications of Safeguarded AI. They are actively working with industrial partners to build, test, and scale trusted and valued AI solutions that can reduce costs and deliver more drugs on time.

Programme updates

Featured insights

Can the most abstract math make the world a better place?

Quanta Magazine

Natalie Wolchover explores whether applied category theory can be “green” math.

Sign up for updates

Stay up-to-date on our opportunity spaces and programmes, be the first to know about our funding calls and get the latest news from ARIA.